This article is part of “Ready for What,” a special project exploring the role of postsecondary indicators as part of K-12 accountability systems.

A few years ago, Jessica Baghian, the assistant superintendent and chief academic policy officer for the Louisiana Department of Education, was speaking on a panel at the Association for Education Finance and Policy conference. She said she was “riffing on” her frustration over K-12 accountability systems that fail to communicate what happens to students after they graduate from high school.

“It felt like we were missing an opportunity to tell schools more about what happens to students once their kids graduate,” she said.

Some high schools, she added, are “solving different challenges from day one” to get students back on track while others serve a population in which students arrive “three grade levels ahead.”

Yet both types of schools are measured the same way, she said. She wanted to know “more about the impact schools are having on kids whenever they first get there.”

One of the attendees in that session was Brian Gill, the director of the Mid-Atlantic Regional Educational Laboratory and a senior fellow at Mathematica, a research organization. He had in mind a way to correct what he calls “one of the major flaws” of the No Child Left Behind era.

“If you just look at where the students end up, you’re not distinguishing the school’s performance from the population they happen to serve,” he said.

‘Measurable differences’

The result of that chance meeting is a new statistical model that identifies the “promotion power” of Louisiana’s high schools: the impact of a school on a student’s future success in college or the workforce, after accounting for background factors such as the student’s socioeconomic status and academic performance in 8th grade.

“By controlling for student characteristics, the models seek to distinguish the effects of high schools from other factors influencing student outcomes that are outside the control of schools,” Gill and co-authors Jonah Deutsch and Matthew Johnson wrote last month in their report on the work, which was supported with funding from the Walton Family Foundation.
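To make the idea of “controlling for student characteristics” concrete, here is a minimal sketch of a simplified residual-based approach. It is an illustration only, not the model described in the report: the function name `school_effects`, the record layout, and the data are all hypothetical, and it uses a single made-up control (a prior achievement score) where the researchers use many.

```python
from collections import defaultdict
from statistics import mean


def school_effects(records):
    """Estimate a rough per-school effect from (school, prior_score, outcome) tuples.

    Hypothetical layout: prior_score is an 8th grade score scaled 0-1,
    outcome is 1 if the student graduated in four years, else 0.
    """
    xs = [r[1] for r in records]
    ys = [r[2] for r in records]
    x_bar, y_bar = mean(xs), mean(ys)

    # Ordinary least squares fit of outcome on prior score across all students.
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar

    # Residual: how much better or worse a student did than predicted
    # from prior score alone.
    residuals = defaultdict(list)
    for school, x, y in records:
        residuals[school].append(y - (intercept + slope * x))

    # A school's estimated effect is the average residual of its students.
    return {school: mean(vals) for school, vals in residuals.items()}


# Made-up data: school A enrolls higher-scoring students than school B.
records = [
    ("A", 0.8, 1), ("A", 0.9, 1), ("A", 0.7, 0), ("A", 0.6, 1),
    ("B", 0.2, 1), ("B", 0.3, 0), ("B", 0.4, 1), ("B", 0.1, 0),
]
effects = school_effects(records)
```

In this invented example, school A has the higher raw graduation rate (75% versus 50%), but once prior scores are taken into account school B comes out ahead, because its students graduate more often than their starting point would predict. That is the distinction Gill draws between a school’s performance and the population it happens to serve.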

They calculate a school’s promotion power using five measures:

- Four-year high school graduation rate.

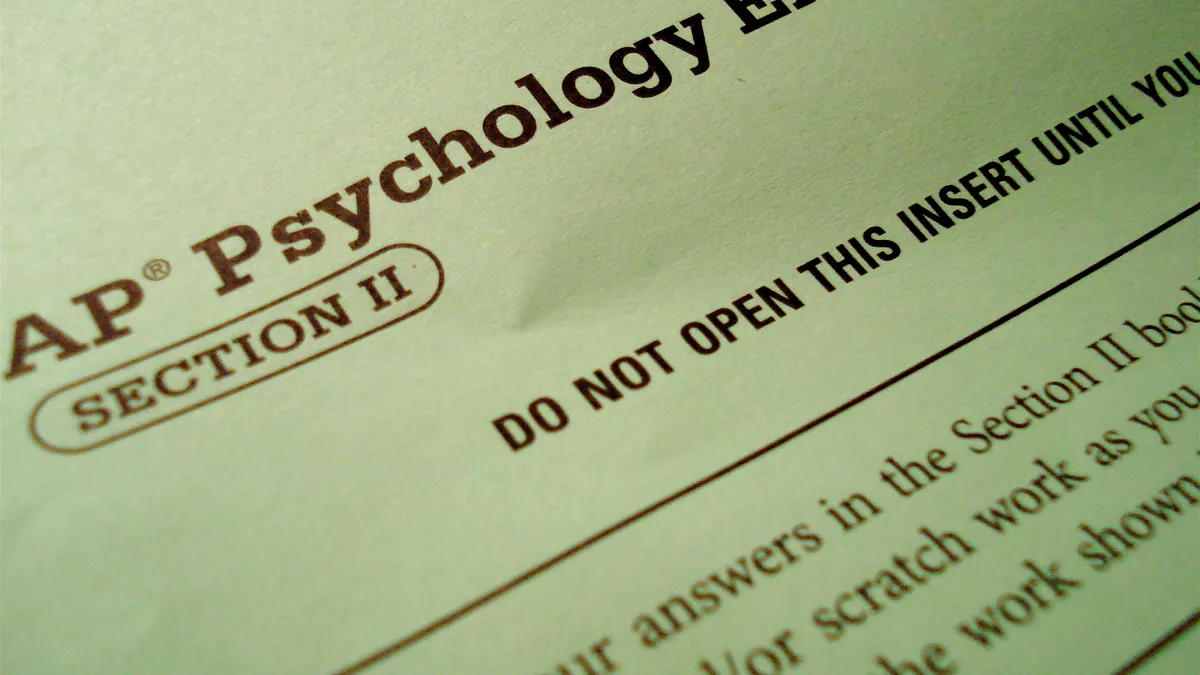

- Completion of a college or career readiness credential, such as an Advanced Placement course or an “industry-valued skill set.”

- Enrollment in a two- or four-year college in the fall following graduation.

- Multiyear college persistence, meaning the student remains enrolled in college for at least four years within a five-year period after high school graduation, with two of those years being at a four-year institution.

- Earnings by age 26, which “provides enough time for students who attended college to complete their undergraduate degree and start working,” the report says.

Baghian, a candidate for state superintendent, described the results of the research as “breathtaking.”

Using data from the state and from the National Student Clearinghouse — a nonprofit that tracks postsecondary outcomes — the researchers found a student moving from an average high school to one in the 95th percentile on each of those five indicators would be significantly more successful.

The likelihood of graduating high school in four years, for example, increases by 14 percentage points, and earnings at age 26 increase by more than $5,500.

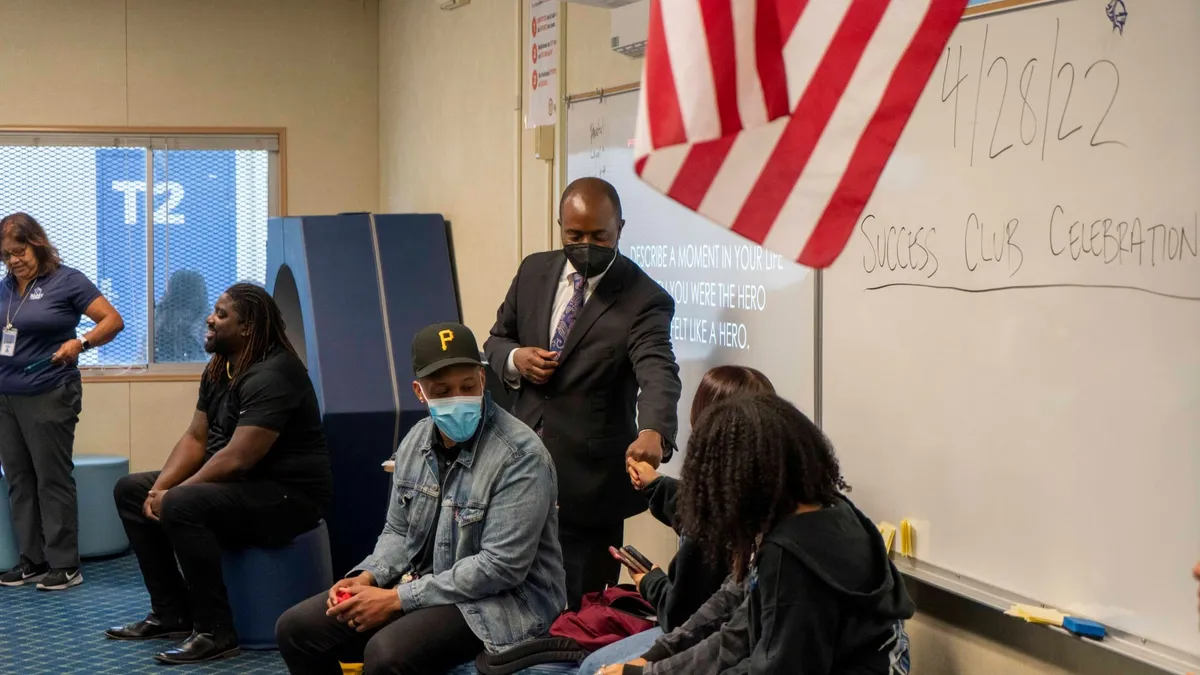

“There are really measurable differences happening there,” Baghian said, adding there’s “a lot to learn about the practices and protocols of those 95th percentile schools.”

Measuring growth in high school

Does that mean Louisiana educators and parents will soon be able to compare their high schools’ power to promote, or that a school’s results will appear on the annual report card? Baghian said the model is still being tested, and how to use the data is still being discussed.

But officials want to at least begin conducting some case studies of 95th percentile schools, and then if the model is sustainable, she added, they’d like to collect the data annually. “We have to find a way to responsibly share it with educators more broadly to encourage the spread of those best practices,” she said.

The Office of the State Superintendent of Education in the District of Columbia — as part of a federal research project with REL Mid-Atlantic — is also at the beginning stages of working with Mathematica to gather similar data.

“They were generally interested in thinking about how to refine accountability measures and thinking particularly about how to include more growth measures for high schools,” Gill explained.

But Gill added there will be a few differences in the D.C. project. The promotion power measures won’t include workforce data, but unlike the model in Louisiana, they will include SAT scores. D.C. Public Schools is among the growing number of districts and schools that make the SAT available to all students at school.

“That’s going to be interesting,” Gill said, “because we’ll be able to see how well a school’s contribution to SAT scores correlates with its contributions to graduation and college entry.”

In addition to identifying schools that perform well even though they serve students from low-income families, the model can also “flag” schools with mediocre results even though they enroll students from advantaged backgrounds.

Other outcomes could also be added, such as voting and voter registration, Gill said, arguing that if preparing educated citizens for a democracy was the original purpose of public education, it makes sense to measure “civic participation.”

The problem of missing data

The research is even more important now, Baghian said, because of the unknowns about how the pandemic and school closures will affect those “walking across the virtual stage.” She added there is the risk of more students falling “into the category of opportunity youth.”

There are already signs the pandemic and its economic fallout have changed graduating seniors’ college plans, which could affect those other long-term outcomes as well. In a recent survey of 1,000 teens, for example, 44% of high school juniors and seniors say the crisis has impacted their plans to pay for college, and 30% of those whose post-high school plans have changed say they will have to put off starting college.

Gill doesn’t know whether the model will spread to other states in the mid-Atlantic region — Delaware, Maryland, New Jersey and Pennsylvania. But he added that because state testing has been suspended for this year, there could be wider interest in finding other ways to measure school effectiveness.

“Every state is going to have to be doing something different in its accountability regime over the next two to three years,” he said. “There is a sense in which these kinds of measures can be, in part, a solution to the problem of missing test data.”