To Richard Culatta, students need to learn about artificial intelligence and its inner workings now. But even more critical, says the CEO of the International Society for Technology in Education, is that educators teach pupils not just about the technology, but how to partner with AI in their own work.

“Every kid that graduates today is going to work on a team where not every member of their team is human,” Culatta says. “How do you work on a team where not all members are human? How do you know what tasks to hand off to non-human counterparts?”

As AI populates our everyday lives — from being woven into search engines to suggesting which social media accounts to follow — so too is the technology working its way into classrooms.

Whether AI appears in student work, in assessment and lesson design, or in building a lunchroom schedule, futurists almost universally agree that AI isn’t going away. Rather, it is a technology that won’t just assist us but will serve as a collaborator.

Culatta says that’s something everyone — especially today’s students — will need to learn to expect. “What type of human do we need to be preparing for the world they’re going to be walking into?”

So how can educators prepare today’s learners for a future working alongside AI? Education and science experts say there are several steps that can be taken within curriculum today to help students prepare for tomorrow.

Help students understand best uses

Schools should think about how to help prepare students to be good humans within an AI-driven world, says Culatta. Aside from knowing how to use AI, students also need to be ready for a world where that technology dominates some skills humans control now.

That may mean focusing on helping them understand the skills AI can handle best versus those that are uniquely human, such as creativity and empathy.

Culatta says that rather than teaching students skills that will likely be handed over to AI, schools should focus on helping them become comfortable handing those tasks over and on strengthening the abilities the technology can’t replace.

Ultimately, Culatta asks, can students tell when it’s better to hand something to AI and when a human would be better suited to the task, and do they understand how to make that call?

Rethink assessments

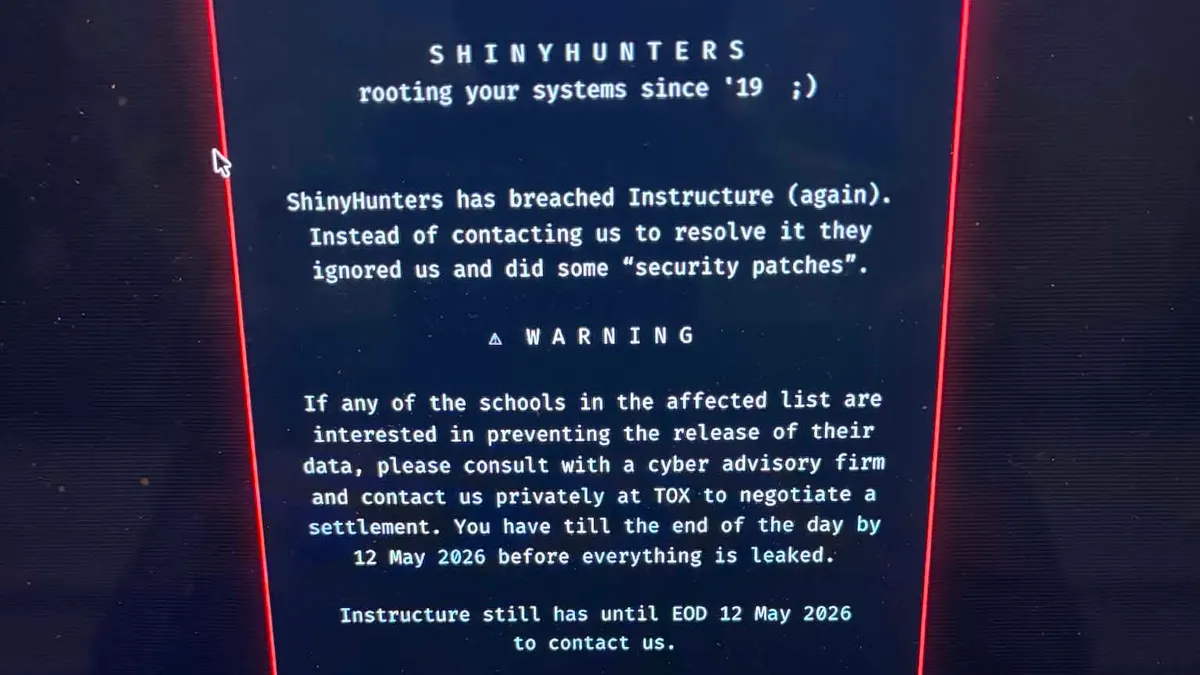

To teach students how to work with and trust AI, schools also need to look at their assessments. Culatta says ISTE gets calls from schools worried that AI is contributing to an increase in cheating.

“There’s no evidence that cheating has increased in schools,” he says.

Instead, he says, more attention should go to rethinking tests that were designed to ask students to cut and paste answers, popping in a definition or deriving a numeric solution to a formula.

Better options include asking students to use AI to come up with six possible solutions to a problem, and then assess the flaws and benefits of each.

“That’s a skill we need for the future,” Culatta says. “It’s critical thinking. They have to know something about the solutions, whether they are good or bad.”

Pete Just, executive director of the Indiana CTO Council, concurs that schools need to shift their thinking away from whether students will or won’t use AI — or even if that’s called cheating — and instead teach them how to use the technology with “academic integrity.”

“It’s acknowledging that you may use it, and if you do, here’s what we expect of you,” Just says.

Provide teachers PD for AI

Knowing how to use AI effectively and to teach that thought process requires educators to have some comfort level with the technology.

While there are always early adopters within any field, using AI through Bing’s search engine or even through an OpenAI subscription is not the same as knowing how to weave the tool into curriculum.

The Consortium for School Networking’s AI Exploration for Educators has been working with New York City Public Schools and thousands of educators to help teachers think differently about AI, says Keith Krueger, CEO of CoSN. That includes the development of the K-12 Generative Artificial Intelligence Readiness Checklist. One starting point, Krueger notes, is for educators to begin using AI for tasks they need to handle in their classrooms.

“One of the themes is to play with it,” Krueger says.

Support around AI is critical, adds Just, who notes that teachers are not getting much professional development yet around the tech. While 5% to 10% of educators are using the tool on their own, he says, the rest need some assistance to get comfortable with how and where to use AI.

“Teachers and administrators should be using it to handle administrative tasks,” Just says. “That’s an easy way to dip into the waters, then move forward on how to import this into classrooms.”

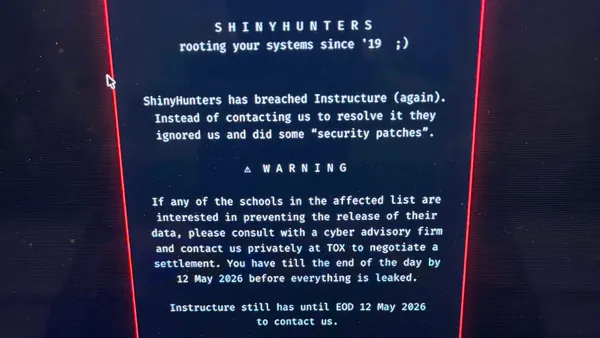

But Just says not everything should be handed over to AI right now. For example, educators may eventually want to be able to chart how students are doing academically or design realistic projections for student growth in subject areas. But putting student data into open AI spaces, like OpenAI today, can raise privacy issues.

For educators to understand these nuances, they first need to get comfortable using AI and learn how it works. Only then can they judge what they can do now and what needs to wait.

“We start with teacher professional development and staff professional development, helping them understand the issues around ethics and student privacy and even practical pedagogical uses of AI,” Just says.

Install guardrails

According to Just, paid services or software license agreements will begin to appear in schools and districts to ensure AI has more guardrails around student data. He says that if schools and districts aren’t paying for K-12-specific AI tools yet, they eventually will.

Krueger agrees. He expects to see more “walled-garden” or district-specific AI solutions soon to allow educators to use the technology to design more student-focused lessons, even those tailored to a specific pupil. Until then, as educators start using AI, they should continue to be mindful of the student information they enter.

“We encourage teachers and administrators to use AI to solve problems they are trying to solve, but with the understanding that if you’re putting something into AI, be careful not to load IEPs [individualized education programs] into AI,” Krueger says.

While caution is important, experts agree that educators do need to start using AI, mainly because their students already are. What’s more, learners will use AI in ways we have yet to imagine. To Culatta, educators who focus on how not to use AI hold students back from developing skills around a technology that will be critical to their future.

“We can say what not to do all day long, but you can’t practice not doing something. And if you want good skills, you have to practice doing something,” Culatta says. “The skills we’re teaching in schools are not aligned with the skills needed to thrive in the digital world. And that’s a huge mess we have to fix in schools.”